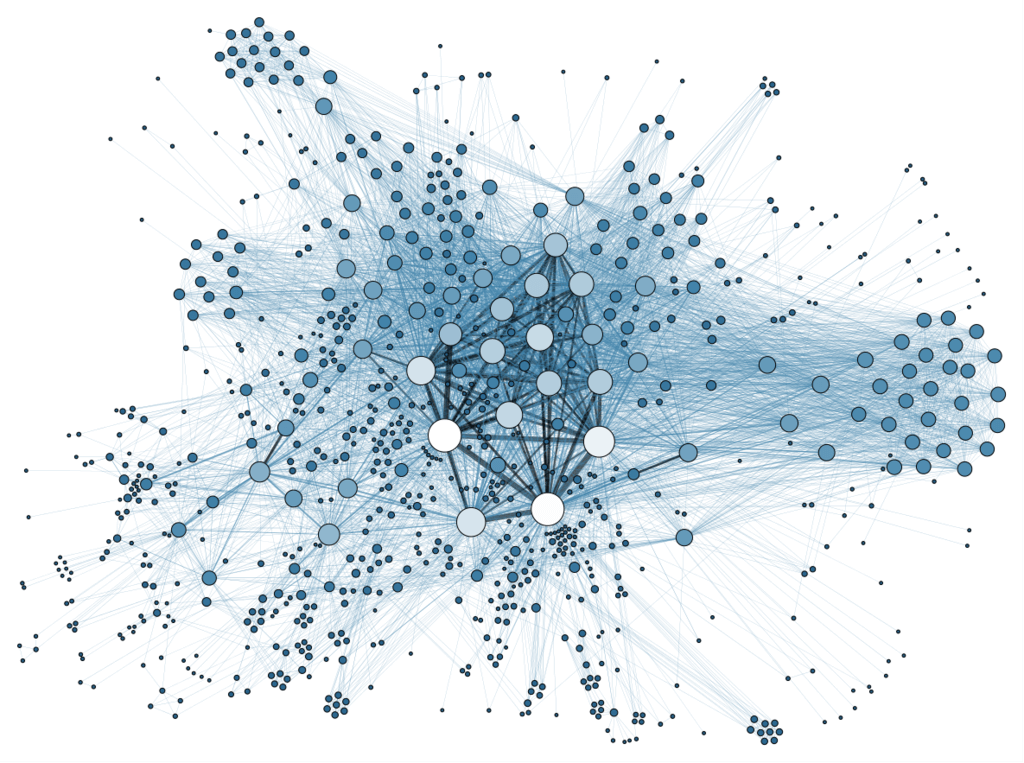

LangChain AI has an exciting feature that supports knowledge graph data represented as subject-predicate-object triplets, following the RDF model. This implementation provides two LLM-powered operations on these triplets: graph extraction and graph Q&A. By default, LangChain uses an LLM such as GPT-3 or GPT-4 to extract knowledge triplets from text and stores them in a NetworkX directed graph.
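The extraction-and-storage step can be sketched in plain Python. This is a minimal illustration under stated assumptions, not LangChain's implementation: the LLM call itself is omitted, the `(subject, predicate, object)` string format and `<|>` separator assumed for the model output are illustrative, and the hypothetical `parse_triples` function and `TripleGraph` class (a dictionary-based stand-in for a NetworkX `DiGraph`) exist only so the example runs without dependencies. The `triples_about` lookup mirrors the retrieval half of graph Q&A, where matching triplets would then be handed to an LLM as context.

```python
from __future__ import annotations

from collections import defaultdict


def parse_triples(llm_output: str, delimiter: str = "<|>") -> list[tuple[str, str, str]]:
    """Parse text like '(A, rel, B)<|>(C, rel, D)' into (subject, predicate, object) tuples.

    The input format is an assumption for this sketch; malformed chunks are skipped.
    """
    triples = []
    for chunk in llm_output.split(delimiter):
        parts = [p.strip() for p in chunk.strip().strip("()").split(",")]
        if len(parts) == 3 and all(parts):
            triples.append((parts[0], parts[1], parts[2]))
    return triples


class TripleGraph:
    """Hypothetical stand-in for a NetworkX DiGraph: subject -> [(predicate, object), ...]."""

    def __init__(self) -> None:
        self.edges: defaultdict[str, list[tuple[str, str]]] = defaultdict(list)

    def add_triple(self, subject: str, predicate: str, obj: str) -> None:
        # One directed edge from subject to object, labelled with the predicate.
        self.edges[subject].append((predicate, obj))

    def triples_about(self, entity: str) -> list[tuple[str, str, str]]:
        # Outgoing edges of an entity; .get avoids inserting empty keys into the defaultdict.
        return [(entity, p, o) for p, o in self.edges.get(entity, [])]


# Usage with a made-up model output (no LLM is actually called here).
llm_output = "(Paris, capital of, France)<|>(France, located in, Europe)"
graph = TripleGraph()
for s, p, o in parse_triples(llm_output):
    graph.add_triple(s, p, o)

print(graph.triples_about("Paris"))  # [('Paris', 'capital of', 'France')]
```

With NetworkX installed, the same idea would store each triplet as a labelled edge in a `DiGraph` instead of the dictionary used here.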

Tag Archives: langchain

Secrets Revealed: How LangChain’s Entity Memory Gives You Tailored Responses!

LangChain is a framework for building LLM applications, including conversational bots, and it provides memory modules that help those bots keep track of the context of a conversation. One of these modules is Entity Memory (`ConversationEntityMemory`), a more complex type of memory that extracts entities from the conversation and maintains a summary of what is known about each one.
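A toy version of the idea behind entity memory is sketched below. It is not LangChain's `ConversationEntityMemory`, which asks an LLM both to pick out entities and to rewrite their summaries. The hypothetical `EntityStore` class substitutes a capitalized-word regex for entity extraction and simple appending for summarization, so the overall flow (extract entities, update each entity's summary, look up relevant summaries for a new message) can run without a model.

```python
from __future__ import annotations

import re


class EntityStore:
    """Hypothetical toy entity memory: entity name -> running summary string."""

    def __init__(self) -> None:
        self.summaries: dict[str, str] = {}

    def extract_entities(self, text: str) -> list[str]:
        # Stand-in heuristic: capitalized words count as entities (an LLM would do this).
        # dict.fromkeys removes duplicates while keeping first-seen order.
        return list(dict.fromkeys(re.findall(r"\b[A-Z][a-z]+\b", text)))

    def update(self, text: str) -> None:
        # Fold the new message into the summary of every entity it mentions.
        for entity in self.extract_entities(text):
            previous = self.summaries.get(entity, "")
            # Stand-in "summarization": append; an LLM would rewrite the summary instead.
            self.summaries[entity] = f"{previous} {text}".strip()

    def context_for(self, text: str) -> dict[str, str]:
        # Summaries for entities in the new message that memory already knows about.
        return {e: self.summaries[e] for e in self.extract_entities(text) if e in self.summaries}


store = EntityStore()
store.update("Alice works at Acme.")
store.update("Alice lives in Berlin.")
print(store.context_for("Where does Alice work?"))
# {'Alice': 'Alice works at Acme. Alice lives in Berlin.'}
```

The per-entity summaries returned by `context_for` are what a bot would add to its prompt, which is how entity memory produces responses tailored to the people and things already discussed.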

Unlock the Secret to Lifelike Chatbots: Conversational Memory Revealed!

By default, LLMs, Chains and Agents are stateless. They operate independently on each incoming query, without retaining any memory of previous interactions. However, in certain applications like chatbots, it is crucial to remember past conversations in both the short and long term. This is where the Memory feature comes into play.
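The difference between a stateless call and a call with memory can be shown with a small sketch. `fake_llm` is a hypothetical stand-in model that can only "remember" a name if it appears in the prompt it is given, and `BufferMemoryChat` is a hypothetical wrapper that stores every turn and prepends the full history to each new prompt, roughly the approach of LangChain's buffer-style `ConversationBufferMemory`. Neither is LangChain code.

```python
from __future__ import annotations

import re
from typing import Callable


def fake_llm(prompt: str) -> str:
    """Hypothetical stateless model: it only knows what is in this one prompt."""
    match = re.search(r"My name is (\w+)", prompt)
    return f"Your name is {match.group(1)}." if match else "I don't know your name."


class BufferMemoryChat:
    """Hypothetical wrapper that keeps every turn and prepends it to the next prompt."""

    def __init__(self, llm: Callable[[str], str]) -> None:
        self.llm = llm
        self.history: list[str] = []

    def ask(self, user_input: str) -> str:
        prompt = "\n".join(self.history + [f"Human: {user_input}", "AI:"])
        reply = self.llm(prompt)
        # Store both sides of the exchange so later prompts include them.
        self.history += [f"Human: {user_input}", f"AI: {reply}"]
        return reply


# Stateless: the second call has no access to the first.
fake_llm("Human: My name is Ana.\nAI:")
print(fake_llm("Human: What is my name?\nAI:"))  # I don't know your name.

# With memory: the earlier turn travels along inside the new prompt.
chat = BufferMemoryChat(fake_llm)
chat.ask("My name is Ana.")
print(chat.ask("What is my name?"))  # Your name is Ana.
```

Because the full history is resent every turn, prompts grow with the conversation; this is the trade-off that summarizing or windowed memory types are designed to address.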

LangChain: The Secret Sauce to Building Next-Level Language Applications

LangChain is a framework built around large language models (LLMs). It supports building LLM-powered applications such as chatbots, generative question answering, summarization, and more.